The definitive guide to SLAM & mobile mapping

What is simultaneous localization and mapping (SLAM)?

How does it work, and what does it mean for 3D mobile mapping?

SLAM 101

Simultaneous localization and mapping (SLAM) is not a specific software application or even one single algorithm. SLAM is a broad term for a technological process, developed in the 1980s, that enables robots to navigate autonomously through new environments without a map. This foundational technology has paved the way for advances in mobile mapping.

Autonomous navigation requires locating the machine in the environment while simultaneously generating a map of that environment. It’s very difficult to accomplish, because the machine needs to have a map of the environment to estimate its own location. But to generate the map, it needs to know its own location.

As a result of this circular dependency, SLAM is sometimes called a “chicken or egg” problem. SLAM approaches break the circle by estimating the position and the map together and refining both as new sensor data arrives, which is what makes accurate and efficient mobile mapping systems possible.

How does SLAM work?

There are many approaches to SLAM. Luckily, we can still make some generalizations to demonstrate the basic idea.

Here’s a very simplified explanation: When the robot starts up, the SLAM lidar mapping technology fuses data from the robot’s onboard sensors, and then processes it using computer vision algorithms to “recognize” features in the surrounding environment. This enables the SLAM to build a rough map, as well as make an initial estimate of the robot's position.

When the robot moves, the SLAM takes that initial position estimate, collects new data from the system’s onboard sensors, and makes a new (and improved) position estimate. Once that new position estimate is known, the map is updated in turn, which completes the cycle.

By repeating these steps continuously, the SLAM tracks the robot's path as it moves through the asset. At the same time, it builds a detailed map, showcasing the practical application of SLAM in creating comprehensive mobile mapping systems.
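The cycle described above can be sketched in a few lines of Python. This is a toy illustration under strong assumptions, not how any particular product works: the world is one-dimensional, every landmark is visible and correctly identified at every step, and the estimates are blended with simple averages instead of the probabilistic filters or graph optimization that real SLAM systems use. All names here (`run_toy_slam`, `observe`, the noise parameters) are hypothetical.

```python
import random


def observe(pose, landmarks, noise, rng):
    """Return the measured offset from the robot to each landmark."""
    return {i: (lm - pose) + rng.gauss(0.0, noise) for i, lm in enumerate(landmarks)}


def run_toy_slam(true_landmarks, moves, odom_noise=0.05, meas_noise=0.02, seed=0):
    rng = random.Random(seed)
    true_pose = 0.0   # ground truth, used only to simulate the sensors
    est_pose = 0.0    # the start position defines the origin of the map

    # Startup: build a rough map from the first set of observations.
    # Each entry is (estimated position, number of observations so far).
    first = observe(true_pose, true_landmarks, meas_noise, rng)
    map_est = {i: (est_pose + off, 1) for i, off in first.items()}
    trajectory = [est_pose]

    for move in moves:
        # Simulate the robot moving and its sensors reporting noisy data.
        true_pose += move
        odometry = move + rng.gauss(0.0, odom_noise)
        offsets = observe(true_pose, true_landmarks, meas_noise, rng)

        # Localization: start from the previous estimate plus odometry, then
        # blend in the pose implied by each mapped landmark.
        predicted = est_pose + odometry
        implied = [map_est[i][0] - off for i, off in offsets.items()]
        est_pose = (predicted + sum(implied)) / (1 + len(implied))

        # Mapping: refine each landmark with the new pose (running mean).
        for i, off in offsets.items():
            mean, n = map_est[i]
            map_est[i] = ((mean * n + est_pose + off) / (n + 1), n + 1)

        trajectory.append(est_pose)

    return trajectory, {i: mean for i, (mean, _) in map_est.items()}


trajectory, landmark_map = run_toy_slam([2.0, 5.0], moves=[1.0, 1.0, 1.0])
print("estimated path:", [round(p, 2) for p in trajectory])
print("estimated map:", {i: round(m, 2) for i, m in landmark_map.items()})
```

The point of the sketch is the alternation: the pose estimate leans on the current map, and the map is then refined using the new pose estimate.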

The evolution of SLAM

Due to the rapid growth of computing power since the 1980s – not to mention the availability of freely downloadable code from companies like Google – SLAM is now used in a wide variety of applications. In fact, you’ll see it in virtually every application where a machine requires a live 3D map of its surroundings to operate. This development in technology has helped us understand how to implement SLAM across different platforms and environments, enhancing the capabilities of mobile mapping systems.

Here are just a few applications that rely on SLAM technology:

- Autonomous consumer robotics (like drones or vacuum cleaners)

- Self-driving cars

- Smartphone augmented reality apps

- 3D mobile mapping systems

These everyday products utilize simultaneous localization and mapping technology for accurate real-time navigation.

SLAM and mobile mapping

Now we can talk about the application of SLAM most important to us: mobile mapping systems. Here, simultaneous localization and mapping technology is paired with laser scanners in devices designed to offer the best possible building documentation workflows.

Mobile mapping systems use a combination of highly calibrated sensors and SLAM technology optimized for mapping. This combination enables you to capture 3D point clouds and panoramic images as you walk. These systems offer fast, comprehensive documentation for large assets and complex environments like factories, work sites, and offices.

You might also see these devices referred to as:

- Mobile mapping systems (MMS)

- Walkaround mobile mapping systems

- SLAM laser scanners

But they're all essentially the same thing.

These devices are not to be confused with another kind of mobile mapping system, however, which mounts on top of a vehicle for large outdoor capture projects.

What does SLAM do in mobile mapping?

SLAM is the “secret sauce” that enables mobile mapping systems to work without a tripod.

The SLAM technology fuses data from the sensors on-board the mobile mapping system to track your location as you walk through the asset. You can think of each position on this trajectory as a “virtual tripod,” which the software uses during the processing stage to ensure that each point in the point cloud is in the correct place. This highlights the efficiency and accuracy of simultaneous localization and mapping in producing reliable data sets.

Why you should care

By enabling the development of mobile mapping technology, SLAM has helped us take the logical next step in building documentation technology. This progression from manual methods to the sophisticated use of simultaneous localization and mapping in mobile systems represents a significant leap forward in efficiency, accuracy, and speed.

For a long time, building documentation work was performed manually, with devices like theodolites or tape measures. The 1980s saw the first total stations, which capture points much faster and with extremely high precision. In the 2000s terrestrial laser scanners (TLS) came along and brought the documentation workflow to the next level by capturing millions of points instead of only one at a time.

In 2015, the first mobile mapping systems using SLAM appeared. They offered another step forward: they also capture millions of points, but they do so while the operator moves, so they are no longer confined to a fixed position as a TLS is. As a major bonus, they also include RGB cameras that capture 360° photography with no extra effort.

RAPID CAPTURE

A TLS workflow can require setting up your scanner dozens of times for a single project (maybe hundreds, if the asset is particularly large). Mobile mapping eliminates this step, speeding up the workflow significantly. In typical projects, we've seen a speed increase of 10x or more.

FASTER REGISTRATION

Since a TLS captures only a small area at a time, you’ll need to connect the scans to produce a final point cloud. You can accomplish this by overlapping your scans, which slows you down since it limits the distance you can move the TLS to the next station. Or, you can use survey targets, which is complex and increases the time spent on site.

A mobile device scans continuously - in some cases capturing up to 3,000 sq meters - before you need to start another scan. This means less work to ensure full coverage.

COMPREHENSIVE DATA

Since any laser scanner can capture only what’s in its line of sight, a TLS requires that you move the device to a new setup if you want to scan past an obstruction and avoid blank spots in your data. A mobile mapping device enables you to walk around an obstruction, fill in your capture, and be on your way.

INTUITIVE, PHOTOREALISTIC DOCUMENTATION

The best mobile mappers use the combination of lidar and RGB cameras to capture a densely colored, photorealistic 3D data set of the building. These walkthroughs are intuitive to navigate, explore, and measure, even for stakeholders who are totally unfamiliar with point clouds.

REAL-TIME FEEDBACK

Leading mobile mapping devices have a tablet display that shows you the quality of your capture as you work, offering real-time feedback on your scan. Miss a spot? The screen will show you, so you can immediately make a correction.

Mobile mapping vs terrestrial laser scanning

Comparing workflows and how they meet your project requirements

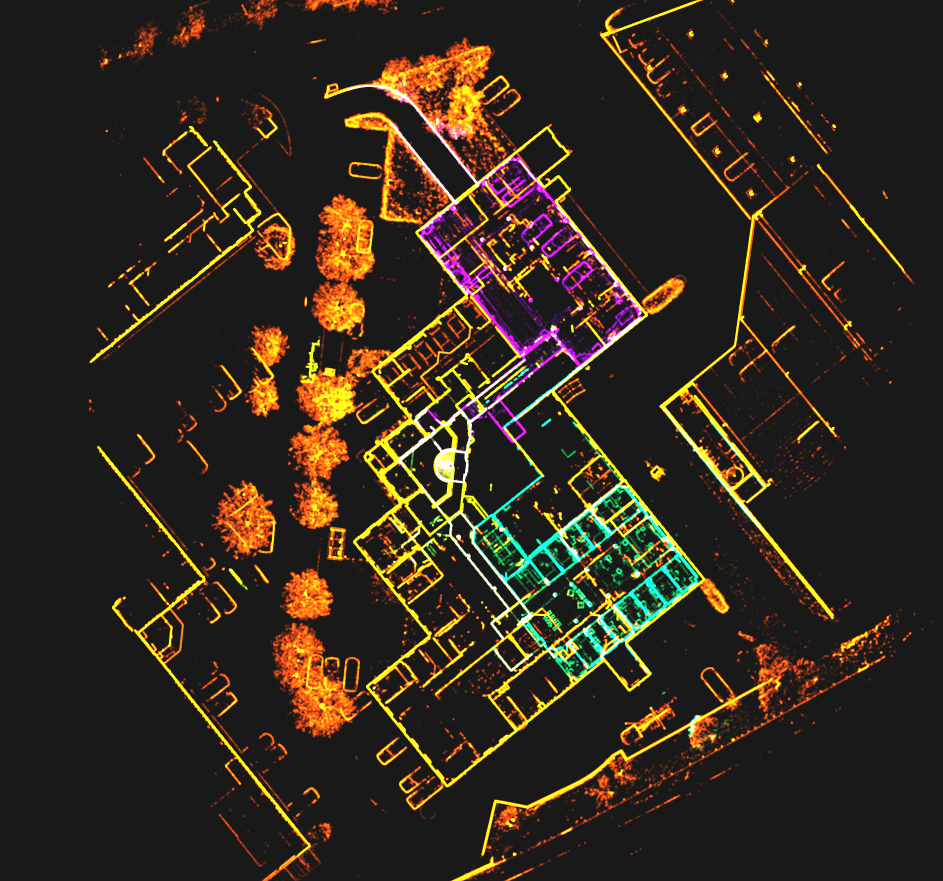

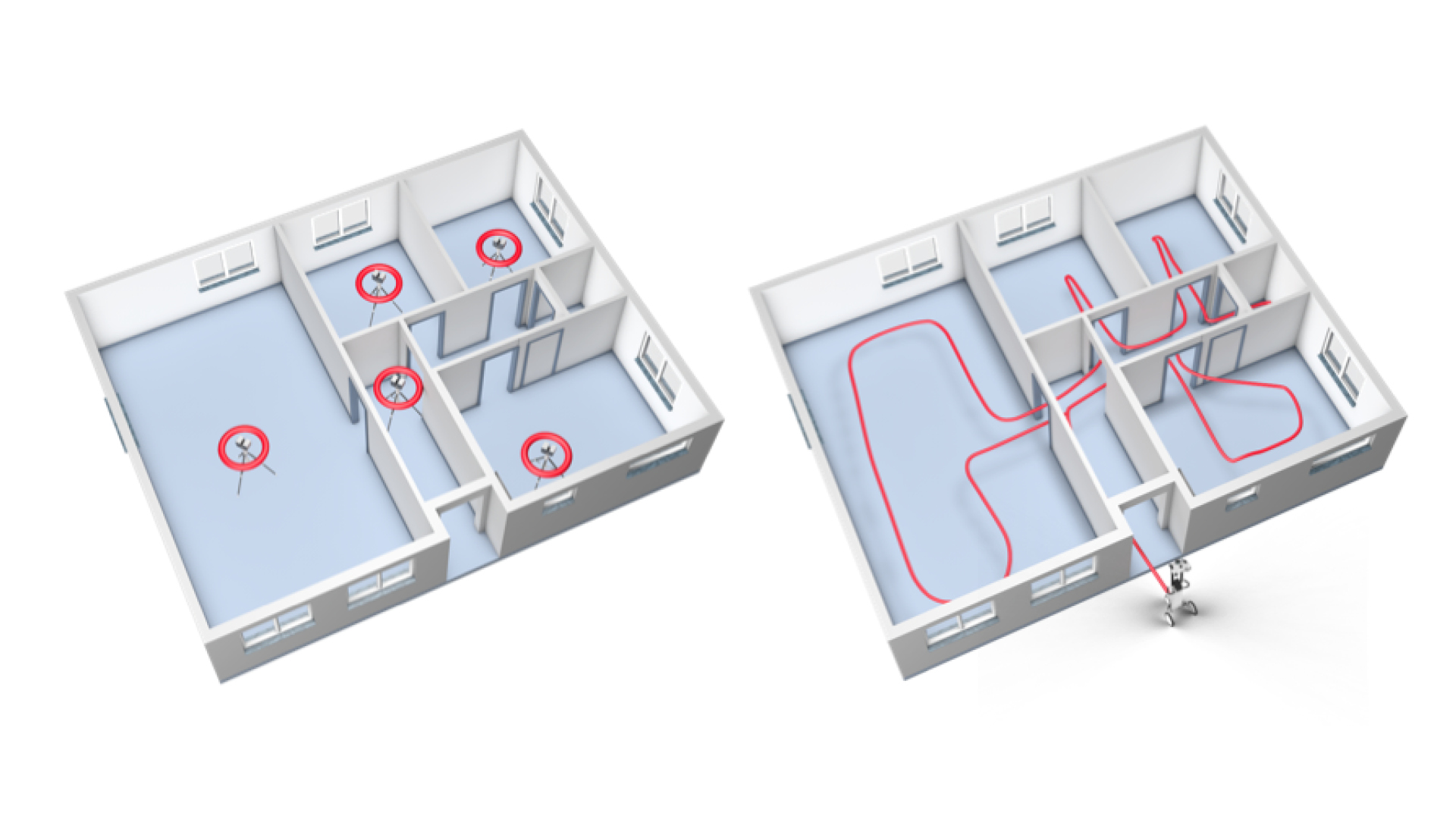

To illustrate the benefits of SLAM and mobile mapping systems, let’s look at how a mobile mapper performs compared to a TLS while documenting a typical office environment.

To the right, you will see an illustration of the setups you'd need to capture this complex space using TLS and target-based registration. Add extra setups in each of the door frames for cloud-to-cloud registration (unless you want to spend the time to set up targets). Add extra setups if there are furnishings obstructing your scanner’s view, and even more setups if you wanted to capture the fine details of features like window frames.

At the fastest, you could scan this space in about 20 minutes with a TLS, but given the extra factors we listed above, you can see how it would likely take more than that.

Using a mobile mapping workflow, you could ditch the tripod, and just walk through the space as necessary to capture. You can move fast and be sure that you’ve gotten everything you need, allowing the simultaneous localization and mapping technology to take care of mapping your surroundings.

What SLAM technology can do for your business

By enabling the fast, flexible workflows we covered above, SLAM and mobile mapping systems can have long-reaching effects on your business. Adding a mobile mapper to your tool set can help you to keep your existing clients and gain new clients, all to help your business grow.

IMPROVE EFFICIENCY

Finish bigger projects at less cost and take on more projects with your existing workforce by using simultaneous localization and mapping to save time and manpower.

REDUCE CLIENT DISRUPTION

Get in and get out faster. Reduce the amount of downtime required for your scan and win new clients in sensitive industries like healthcare, manufacturing, and more.

EXPAND SERVICES AND OFFERINGS

Start offering fully immersive 360° walkthroughs generated by your mobile mapping device. Make your list of services more compelling than your competitors’.

INCREASE FLEXIBILITY

Meet the needs of price-sensitive customers by producing high-quality data sets at a low cost.

SHARPEN COMPETITIVE EDGE

By improving your efficiency, flexibility, and services, and reducing disruptions to your clients, you’ll make your business stand out from competitors.

Free white paper

Find out how accurate NavVis VLX is compared to static scanners and understand the role of SLAM in achieving groundbreaking accuracy. This white paper details the technicalities of how the NavVis VLX utilizes mobile mapping technology to offer accurate and efficient results.

Frequently asked questions

Now that you understand the basics of SLAM and mobile mapping systems, you likely have some in-depth technical questions. We’ll answer the most common questions below – check back in the future, because we’ll update this section with more answers to do with SLAM and mobile mapping.

What technical terms do I need to know to understand mobile mapping?

We’ve put together a short glossary that you can read here. It explains all the technical terms in plain English.

How accurate is a mobile mapping system?

The simple answer: The best mobile mapping systems use the latest simultaneous localization and mapping technology to reliably produce data that is more than suitable for as-built documentation needs. In one of our white papers, we tested our own NavVis VLX by performing a single scan in a parking garage. Using loop closures to correct errors, the team validated the data at 8 mm absolute accuracy, to one sigma. With loop closures and control-point optimization combined, the absolute accuracy improved to 6 mm.

That means you could confidently use the data for projects like LOD 300 BIMs or floor plans with a scale of up to 1:50.

The more complicated answer: The absolute accuracy of a mobile mapper is difficult to define with a single number. This is because a mobile mapper produces the final point cloud using SLAM technology, and SLAM performance varies depending on numerous real-world factors, such as the geometry of the environment you’re scanning. (This is why you often see absolute accuracy numbers listed on spec sheets as a range, for instance 6-15 mm.)

Before a vendor can make generalized statements about the accuracy of any given mobile mapper, they will have to perform extensive testing, in a variety of scenarios, and see how the system works in each of the real-world scenarios where it would be used.

Why do some SLAM systems perform better than others?

If you lined up three systems with identical form factors and sensor payloads, you could still expect the systems to return results that vary significantly in quality. Why is this?

It comes down to the quality of the SLAM implementation. Here are two of the primary factors that set one SLAM system apart from another.

ROBUSTNESS

In the real world, SLAM mobile mapping systems will find some environments more challenging than others. Here’s a good example: A long hallway with minimal doorways lacks the features that the SLAM needs to track your position. This can cause errors in the trajectory data generated by the SLAM and degrade the accuracy of the final point cloud.

More robust SLAM systems can handle more of these situations, and handle them better. They produce a better trajectory and, in turn, more accurate final point clouds.

ERROR CORRECTION

The environment isn’t the only thing that can create errors in a mobile data set. Errors also come from the sensors themselves: all sensors produce a certain amount of noise, which adds tiny deviations to the SLAM estimate. Over time, these deviations accumulate into a problem called drift.

That’s why virtually every mobile mapping system on the market offers features that correct errors and improve the accuracy of your final data set.

Most providers suggest performing loop closures while scanning with a mobile mapping system. A loop closure corrects accumulated errors when you return to a spot you've already scanned. But simply performing loop closures will not yield the same results for every scanner. Additionally, some systems offer the option to lock the trajectory data to surveyed control points, but most do not.

In short, some SLAM systems have been designed to handle the complexities of real-world scanning better than others. This difference shows clearly in the results.
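To make drift and loop closure more concrete, here is a minimal sketch under simplifying assumptions. It assumes a 2D walk around a square, a constant 1 cm error on every measured step, and a correction that simply spreads the end-point mismatch evenly along the path. Real systems typically solve a much richer optimization problem, and nothing here describes a specific vendor's method. The function names `integrate` and `close_loop` are hypothetical.

```python
def integrate(steps, start=(0.0, 0.0)):
    """Dead-reckon a 2D path by summing per-step displacements."""
    path = [start]
    for dx, dy in steps:
        x, y = path[-1]
        path.append((x + dx, y + dy))
    return path


def close_loop(path):
    """Spread the end-point mismatch linearly along a path that should end where it began."""
    n = len(path) - 1
    if n == 0:
        return list(path)
    err_x = path[-1][0] - path[0][0]
    err_y = path[-1][1] - path[0][1]
    return [(x - err_x * k / n, y - err_y * k / n) for k, (x, y) in enumerate(path)]


# True motion: a 4 m square walked in 1 m steps, ending at the start.
true_steps = [(1, 0)] * 4 + [(0, 1)] * 4 + [(-1, 0)] * 4 + [(0, -1)] * 4
# Assumed sensor error: every step is measured 1 cm too far in x.
measured_steps = [(dx + 0.01, dy) for dx, dy in true_steps]

drifted = integrate(measured_steps)    # small errors add up along the walk
corrected = close_loop(drifted)        # returning to the start exposes the error

print("end point before closure:", drifted[-1])
print("end point after closure: ", corrected[-1])
```

Because the assumed error is identical on every step, the even spread recovers the true path in this example. With random noise the correction only reduces the error, which is one reason loop closure quality differs between systems.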

Why do some SLAM systems process data faster than others?

It comes down to computing power.

As discussed above, a SLAM mapping system fuses data from a variety of sensors to produce a point cloud. The list includes IMUs that track the device’s orientation, HD cameras that snap large, colorized images, and multiple lidar units that record 600,000 points (or more) per second.

The challenge here is that the sensor payload produces a huge amount of data - too much for the computer in a mobile device to process easily.

As a result, each manufacturer needs to pick its priorities for data processing. Some design their devices to generate point clouds in real time and compromise on quality. Others choose to process the data more slowly, but produce higher quality results. Another group gives you the option to select real-time processing or higher-quality processing, depending on the needs of your project.

NavVis has chosen yet another approach. The company’s mobile mapping systems process data while you scan to display real-time visual feedback on your tablet. Then, they use the power of the cloud back in the office to finalize the data and produce point clouds of the highest quality.
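For a rough sense of the data volume behind this trade-off, the arithmetic can be sketched as follows. Only the 600,000 points per second comes from the text above. The 16 bytes per point (x, y, z, and intensity stored as 32-bit floats) and the 10-minute scan length are assumptions made for illustration, and camera and IMU data are left out entirely.

```python
POINTS_PER_SECOND = 600_000   # lidar rate quoted in the text
BYTES_PER_POINT = 16          # assumption: x, y, z, intensity as 32-bit floats


def lidar_bytes(duration_s, points_per_second=POINTS_PER_SECOND,
                bytes_per_point=BYTES_PER_POINT):
    """Raw lidar data volume in bytes for a scan of the given duration."""
    return duration_s * points_per_second * bytes_per_point


per_second_mb = lidar_bytes(1) / 1e6
ten_minutes_gb = lidar_bytes(10 * 60) / 1e9   # assumed scan length: 10 minutes
print(f"{per_second_mb:.1f} MB per second, {ten_minutes_gb:.2f} GB per 10-minute scan")
```

Under these assumptions the lidar alone produces several gigabytes in a single short scan, before any images are added.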

How can the SLAM system produce a point cloud more accurate than the sensor is specified for?

Because a mobile mapping system captures continuously as you walk.

Where a terrestrial scanner captures each measurement point once during a scan, a SLAM system automatically captures each measurement numerous times, from multiple angles, as you move through the asset.

This gives the post-processing software a large set of possible x, y, and z values for each point. It performs complex analyses on these values, enabling it to reduce or even eliminate uncertainty that arises from physical phenomena like sensor noise.

The result? It can produce a point cloud that is more accurate than the sensor is specified for.
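The statistical idea behind this can be illustrated with a short simulation. It is a sketch under an idealized assumption: each repeated observation of a point carries independent, zero-mean noise, so averaging N observations shrinks the spread by roughly the square root of N. Real post-processing is more involved, and errors that repeated observations share (such as trajectory errors) do not average out this way. The 10 mm sensor noise and the function names are hypothetical.

```python
import math
import random
import statistics


def standard_error(sensor_sigma, n_observations):
    """Expected spread of the mean of n independent, zero-mean noisy readings."""
    return sensor_sigma / math.sqrt(n_observations)


def simulate_spread(sensor_sigma, n_observations, trials=2000, seed=0):
    """Empirical spread of the averaged estimate over many simulated points."""
    rng = random.Random(seed)
    means = [
        statistics.fmean(rng.gauss(0.0, sensor_sigma) for _ in range(n_observations))
        for _ in range(trials)
    ]
    return statistics.pstdev(means)


# Compare theory and simulation for an assumed 10 mm single-shot sensor noise.
for n in (1, 4, 25, 100):
    print(n, round(standard_error(10.0, n), 2), round(simulate_spread(10.0, n), 2))
```

In this idealized setting, a point observed 100 times ends up with about one tenth of the single-measurement noise.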

Mobile mapping technology is changing fast. How do I know my device won’t be obsolete as soon as I buy it?

A SLAM mobile mapping device is a lot more than its hardware—it relies heavily on software to produce a final point cloud. By updating and tweaking that software, a manufacturer can upgrade your device long after you’ve made the initial purchase.

Some vendors continue to develop their software to make improvements to SLAM processing, live visualization, and the quality of their post-processing. They release these improvements as software updates, so you can simply download and install them on your device or your computer to upgrade. Voila: you now have the latest technology.

What is the difference between localization and mapping?

Localization and mapping serve distinct functions in the context of SLAM. Localization is the process through which a system identifies its own position within an environment, while mapping is the process of generating a map of that environment.

Localization focuses on understanding where the device is in relation to its surroundings, whereas mapping is about creating an accurate representation of those surroundings. Simultaneous localization and mapping combines both processes to produce accurate mobile mapping outputs.

What is the localization and mapping problem?

The localization and mapping problem refers to the challenge of navigating an unknown environment by determining one's location and creating a map of the environment simultaneously. This problem can be solved using simultaneous localization and mapping technology, allowing you to observe the surroundings and deduce the device's position while incrementally building a comprehensive map.

What are the three types of localization?

In terms of mobile mapping and SLAM, there are primarily three types of localization: GPS-based, landmark-based, and relative localization. GPS-based localization relies on satellites to determine precise locations outdoors. Landmark-based localization uses recognizable features within the environment to identify location. Relative localization, which is mostly used in mobile mapping, calculates movement relative to a previously known position, enhancing the accuracy of both the position and the map of the environment.
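Relative localization can be sketched as repeatedly applying a measured motion to the last known pose. The example below is a minimal 2D illustration, assuming each motion is reported as a forward distance followed by a turn. The function name `apply_motion` and the pose layout are hypothetical, and no sensor noise is modeled.

```python
import math


def apply_motion(pose, forward, turn):
    """Advance a pose (x, y, heading in radians) by a forward distance, then a turn."""
    x, y, heading = pose
    x += forward * math.cos(heading)
    y += forward * math.sin(heading)
    return (x, y, heading + turn)


pose = (0.0, 0.0, 0.0)  # the known starting position
# Walk 1 m, turn left 90 degrees, then walk 1 m more.
for forward, turn in [(1.0, math.pi / 2), (1.0, 0.0)]:
    pose = apply_motion(pose, forward, turn)

print(tuple(round(v, 3) for v in pose))
```

Because every new pose builds on the previous one, any error in a single motion estimate carries forward into all later poses, which is why relative localization is usually combined with corrections from the map.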

What are the challenges of mobile mapping?

Mobile mapping faces several challenges. These include dealing with dynamic environments where changes occur frequently, ensuring accuracy in the presence of sensor noise, and managing the computational demands of real-time processing. Mapping accurately across varying terrains and conditions adds further difficulty.

Try it yourself

Take the next step in the mobile mapping revolution

The speed and scalability of mobile mapping devices are the best they've ever been, bringing survey-grade accuracy to the most challenging projects. Get hands on with NavVis VLX and see for yourself what's possible with the latest in mobile mapping and simultaneous localization and mapping technology.